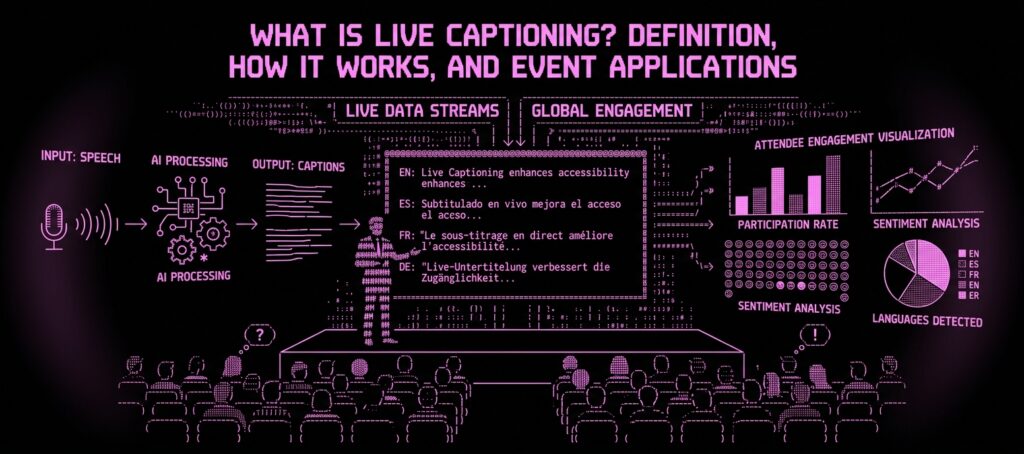

Live captioning is the real-time conversion of spoken words into synchronized text during events, broadcasts, meetings, or presentations. It enables people who are deaf or hard of hearing to follow spoken content, and it improves comprehension for all attendees, including non-native speakers and those in noisy environments. Live captions appear on screens, within video players, on personal devices, or as overlays on livestreams, typically within 1-5 seconds of the words being spoken.

The global closed captioning services market was valued at approximately $370 million in 2024 and is projected to reach $850 million by 2033, a 9.7% compound annual growth rate (Data Horizon Research, 2025). Two forces drive this growth: expanding regulatory requirements, including the April 2026 ADA Title II deadline for state and local government web content and mobile apps to meet WCAG 2.1 Level AA, and growing attendee expectations that live captioning is a standard event feature rather than an optional accommodation.

Live Captioning Defined

Live captioning sits within a family of related services that event professionals often confuse.

- Live captioning is the broad term for any real-time text display of spoken content, encompassing human stenography, voice writing, and AI-powered automatic speech recognition.

- CART (Communication Access Realtime Translation) is a specific type of live captioning performed by certified stenographers. CART is the gold standard for accuracy (98-99%) and is widely recognized as an accommodation under the ADA.

- Closed captioning refers to captions that viewers can toggle on or off. It is standard for pre-recorded video and most virtual event platforms.

- Open captioning refers to captions that are permanently visible and cannot be turned off. It is used when captions must be visible to all attendees.

- Subtitles are typically associated with pre-recorded content and may be simplified or condensed.

The distinction matters for compliance. The ADA's standard is “effective communication,” and the FCC expects accurate captions, so for many events compliance means CART-quality captioning, not just any form of text display.

How Live Captioning Works

Human Captioning (CART/Stenography)

A trained CART provider uses a stenotype machine to capture speech phonetically at 200-250+ words per minute. Specialized software converts the phonetic shorthand into readable text.

- Accuracy: 98-99% (NCRA certification requires a minimum of 96%)

- Latency: 2-4 seconds

- Output: Verbatim text including speaker identification

- Limitations: Typically English-only per provider. Requires clean audio feed.

Voice Writing (Respeaking)

A trained voice writer listens to the speaker and repeats everything into a microphone connected to speech recognition software, adding punctuation commands and speaker identification verbally.

- Accuracy: 95-98% with trained voice writers

- Latency: 3-6 seconds

- Limitations: Single language; accuracy depends on the voice writer’s familiarity with the subject matter

AI-Powered Automatic Captioning

Machine learning models process audio in real time using automatic speech recognition (ASR). Options include the built-in captions in Zoom, Teams, and Google Meet, and standalone services such as Otter.ai, Rev, and Verbit.

- Accuracy: 80-95% in controlled conditions, falling to 70-85% under real-world event conditions. One independent test found an average of 61.92% accuracy under uncontrolled conditions (Sonix, 2025).

- Latency: 1-3 seconds

- Advantages: Low cost, multilingual support (some platforms caption in 30-100+ languages), no human scheduling required

Hybrid Captioning (AI + Human Editor)

An ASR engine generates draft captions and a human editor corrects them in real time.

- Accuracy: 95-98%

- Latency: 3-8 seconds

- Advantages: Lower cost than full CART with accuracy approaching CART quality

The right method depends on the audience and compliance requirements. For events with deaf attendees who rely on captions as their primary communication mode, CART provides the accuracy they need. For general accessibility, AI captioning offers a cost-effective baseline. For many organizations, hybrid captioning offers the best balance of cost and accuracy.
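The accuracy percentages quoted for each method can be made concrete with a small calculation. The sketch below assumes accuracy is defined as 100% minus the word error rate against a reference transcript; this is an assumption, since the source does not state how each figure was measured, and certification bodies may count errors differently. The function name and the text normalization (lowercasing, splitting on whitespace) are illustrative choices.

```python
def word_accuracy(reference: str, hypothesis: str) -> float:
    """Return word-level accuracy (100% minus word error rate), floored at 0."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")

    # Word-level edit distance, computed row by row with dynamic programming.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # word missing from the captions
                curr[j - 1] + 1,     # extra word in the captions
                prev[j - 1] + cost,  # matching or substituted word
            ))
        prev = curr

    errors = prev[-1]
    # Extra words can push errors past len(ref), so clamp at zero.
    return max(0.0, 100.0 * (len(ref) - errors) / len(ref))


spoken = "the quick brown fox jumps over the lazy dog today"
captioned = "the quick brown fox jumps over the lazy dog"
print(f"{word_accuracy(spoken, captioned):.1f}%")
```

At this scale, one wrong word in every ten corresponds to 90% accuracy, which helps explain why a few percentage points separate captions that read cleanly from captions that confuse.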

Live Captioning for Events: Why It Matters

Legal Compliance

Multiple laws and regulations require or strongly encourage live captioning at events.

- ADA: Requires “effective communication” for people with disabilities at events covered by Title II and Title III. The DOJ rule with an April 2026 deadline requires state and local government web content and mobile apps to meet WCAG 2.1 Level AA.

- FCC: Requires captioning for video programming distributed on television and, in many cases, for that programming when it is later delivered online.

- Section 508: Requires federal agencies to make electronic and information technology accessible, including captioned video.

- State and local laws: Many states (California, New York, Illinois, Massachusetts) have additional accessibility requirements.

Digital accessibility lawsuits reached 4,187 in 2024, and filings were tracking 37% higher through 2025.

Attendee Impact

The impact extends far beyond the deaf and hard-of-hearing population, though that population is substantial: 15% of American adults (37.5 million people) report some degree of hearing loss.

Who Benefits

- Non-native speakers (reading + hearing improves comprehension)

- Attendees in noisy environments (expo halls, large ballrooms)

- Attendees who process information better visually

- Remote attendees with unreliable audio

Content Value

Captions produce a text record of every session, becoming the foundation for searchable archives, blog posts, social media excerpts, training materials, and compliance documentation. Research shows captions improve information retention by 12% even for hearing audiences (University of South Florida).

Live Captioning Costs and Pricing

Human CART

- On-site CART: $150-$300 per hour. Most providers require a 2-hour minimum.

- Remote CART: $125-$250 per hour.

- Two-day conference (8 hours/day, single track): $2,000-$4,800

- Two-day conference (8 hours/day, 3 tracks): $6,000-$14,400

AI Automatic Captioning

- Built-in platform captions (Zoom, Teams, Google Meet): Included with paid plans at no additional cost

- Standalone AI captioning services: $50-$500 per event

- Enterprise event captioning: $500-$2,000 per event for platforms with custom vocabulary and multi-language support

Hybrid (AI + Human Editor)

- Rate: $100-$300 per hour

- Two-day conference (single track): $1,600-$4,800

The 10-50x cost difference between AI and human captioning is the central tension in the industry. AI captioning makes it affordable to caption every session at every event, while human CART provides the accuracy that certain attendees need. The most inclusive approach is AI captioning for all sessions, with human CART available upon request.
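The conference totals quoted above follow directly from the hourly rates. A minimal sketch of that arithmetic is shown here; the function and dictionary names are illustrative, and the estimate deliberately ignores provider minimums, travel, and rush fees, which the rates above do not cover.

```python
# Hourly rate ranges in USD, taken from the figures quoted in this section.
HOURLY_RATES = {
    "onsite_cart": (150, 300),
    "remote_cart": (125, 250),
    "hybrid": (100, 300),
}


def estimate_cost(method: str, days: int, hours_per_day: int, tracks: int = 1):
    """Return a (low, high) cost estimate for captioning every track."""
    low_rate, high_rate = HOURLY_RATES[method]
    total_hours = days * hours_per_day * tracks
    return low_rate * total_hours, high_rate * total_hours


# Two-day, single-track conference at 8 hours per day.
print(estimate_cost("remote_cart", days=2, hours_per_day=8))
print(estimate_cost("onsite_cart", days=2, hours_per_day=8))
print(estimate_cost("hybrid", days=2, hours_per_day=8))
```

Under these assumptions the single-track CART range of $2,000-$4,800 spans the low end of remote CART to the high end of on-site CART, and the three-track figures are the same totals multiplied by three.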

How to Choose a Live Captioning Solution

- Compliance requirements: Are you subject to ADA, Section 508, or state accessibility laws? CART is the safest choice for compliance.

- Audience needs: Will deaf or hard-of-hearing attendees rely on captions as their primary communication mode? If yes, human CART is strongly recommended.

- Language requirements: Do you need captions in multiple languages? AI platforms support 30-100+ languages. Human CART is typically English-only.

- Budget: For limited budgets, AI captioning provides basic accessibility. As budget allows, upgrade to hybrid or full CART for critical sessions.

- Platform integration: Does the captioning service integrate with your event platform, virtual meeting software, or AV setup? Test before the event.

Best practice: Include an accessibility field on the event registration form asking attendees to specify their needs at least two weeks before the event. This allows you to provide CART for attendees who request it while using AI captioning as a default for all sessions.
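The selection criteria above can be sketched as a simple rule set applied to registration data. This is an illustration only, not a compliance tool: the `EventNeeds` fields and the `recommend_methods` function are hypothetical names, and the rules simply restate the guidance in this section.

```python
from dataclasses import dataclass


@dataclass
class EventNeeds:
    relies_on_captions: bool  # attendees use captions as their primary communication mode
    cart_requested: bool      # CART was requested on the registration form
    languages: int            # number of caption languages needed
    limited_budget: bool


def recommend_methods(needs: EventNeeds) -> list[str]:
    """Return a captioning plan that follows the guidance in this section."""
    # AI captioning is the default baseline for every session.
    plan = ["AI captioning for all sessions"]
    if needs.relies_on_captions or needs.cart_requested:
        plan.append("human CART for attendees who rely on captions")
    elif not needs.limited_budget:
        plan.append("hybrid or full CART for critical sessions")
    if needs.languages > 1:
        plan.append("multilingual AI captions")
    return plan


print(recommend_methods(EventNeeds(True, False, 1, True)))
print(recommend_methods(EventNeeds(False, False, 3, False)))
```

Keeping AI captioning as the unconditional first entry mirrors the best practice above: every session gets a baseline, and human services are layered on where attendees need them.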

Live Captioning vs. CART Services

CART is a type of live captioning. All CART is live captioning, but not all live captioning is CART.

- CART specifically refers to stenographic captioning by a certified provider using a stenotype machine. It is a professional service with certification standards (NCRA), accuracy benchmarks (96%+ required), and legal recognition as an ADA accommodation.

- Live captioning is the broader category that includes CART, voice writing, AI captioning, and hybrid approaches.

When an attendee or regulatory body requests “CART services,” they are requesting human stenographic captioning specifically. When they request “live captioning,” any method that provides accurate, real-time text may satisfy the requirement.

Live Captioning and Event Technology

Live captioning is evolving from a standalone accessibility service into an integrated component of event content intelligence.

Traditional captioning produces a text stream that appears on screens and disappears when the session ends. Modern approaches treat the caption text as structured data that feeds downstream applications.
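As a rough illustration of caption text as structured data, the sketch below stores each caption as a timed, speaker-attributed segment, then reuses the same records for keyword search and for export. The `CaptionSegment` class and helper names are hypothetical, and WebVTT is chosen here only as a familiar caption file format; the source does not name a specific format.

```python
from dataclasses import dataclass


@dataclass
class CaptionSegment:
    start: float  # seconds from the start of the session
    end: float
    speaker: str
    text: str


def format_timestamp(seconds: float) -> str:
    """Format seconds as an HH:MM:SS.mmm timestamp."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def to_webvtt(segments: list[CaptionSegment]) -> str:
    """Render segments as a WebVTT document with speaker voice tags."""
    lines = ["WEBVTT", ""]
    for seg in segments:
        lines.append(f"{format_timestamp(seg.start)} --> {format_timestamp(seg.end)}")
        lines.append(f"<v {seg.speaker}>{seg.text}")
        lines.append("")
    return "\n".join(lines)


def search(segments: list[CaptionSegment], keyword: str) -> list[CaptionSegment]:
    """Return segments whose text contains the keyword (case-insensitive)."""
    return [s for s in segments if keyword.lower() in s.text.lower()]


session = [
    CaptionSegment(0.0, 3.2, "Host", "Welcome to the opening keynote."),
    CaptionSegment(3.2, 7.5, "Speaker", "Today we are talking about accessibility."),
]
print(to_webvtt(session))
print([s.speaker for s in search(session, "accessibility")])
```

Because the segments keep timing and speaker metadata, the same records could also feed summaries, archives, or translation without re-processing the audio.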

AI-powered event platforms like Snapsight integrate captioning with translation, summarization, and content analysis. Instead of providing captions in one language, the platform delivers real-time text in 75+ languages simultaneously. Instead of producing a raw transcript, it generates searchable, structured content with speaker identification, topic detection, and cross-session synthesis. Snapsight has processed 10,415+ sessions across 627+ events, and this approach turns captioning from a compliance checkbox into a content asset that benefits every attendee.

According to EventsAir’s 2026 survey, 95% of event professionals expect their organization’s use of AI to increase, and real-time captioning and transcription are among the most commonly cited AI applications for events.

Related Terms

- CART Services: Communication Access Realtime Translation, the gold standard for live captioning

- Live Event Transcription: The broader practice of converting speech to text in real time

- ADA Compliance for Events: Legal requirements driving captioning adoption

- Simultaneous Interpretation: Real-time spoken translation, complementary to captioning

- Hybrid Event Technology: Technology stack where captioning serves both in-person and virtual audiences

- Virtual Event Platform: Digital platforms with built-in or integrated captioning features

Whether live captioning is legally required depends on your event type and organizer status. Government-affiliated events must provide effective communication under ADA Title II, and the April 2026 rule requires WCAG 2.1 Level AA conformance, which includes captions, for state and local government web content and mobile apps. Private events under ADA Title III must provide reasonable accommodations when requested. Virtual events that stream video publicly may fall under FCC captioning rules. Even where it is not legally required, live captioning is increasingly expected by attendees: 73% expect conferences to use modern event technology (Whova, 2026), and captioning is a core component of that expectation.

There is no universal legal standard for accuracy. CART certification requires 96%+ accuracy. The FCC requires accurate captioning for broadcast content without specifying a percentage. For ADA compliance, captions must provide effective communication, which is judged contextually. As practical guidance: 98-99% accuracy (human CART) is appropriate for attendees who rely on captions as their primary communication mode. 90-95% accuracy (high-quality AI or hybrid) is acceptable for general accessibility. Below 85% accuracy, captions may contain enough errors to be confusing rather than helpful.

Human CART and voice writing work in one language at a time. Multiple languages require multiple providers. AI-powered captioning platforms can process and display captions in dozens of languages simultaneously from a single audio input, either by captioning in the source language and then translating the text, or by performing direct speech-to-text in the target language. This multilingual capability is the primary advantage of AI captioning over human methods for international events.

Live captions at in-person events are typically displayed in one of three ways: on dedicated captioning screens (large monitors or projection screens near the stage that show only caption text), as a caption overlay on presentation screens (a text bar at the bottom of the main presentation display), or through personal device access (attendees open a web link on a phone or laptop to see captions individually). For accessibility, dedicated screens are preferred because attendees can position themselves for optimal viewing without captions competing with presentation content.

Live captioning is real-time text generated during a live event. Subtitles are pre-prepared text synchronized with pre-recorded content. Captions are typically verbatim (every word captured), while subtitles may be condensed or simplified for readability. Captions also include non-speech information (sound effects, speaker identification, music descriptions) that subtitles may omit. At events, “live captioning” is the correct term for real-time text during sessions, and “subtitles” applies to pre-recorded video content shown at the event.